Self-Hosted AI vs Cloud APIs: What South African Businesses Need to Know

You’re burning R50k per month on OpenAI calls. There’s an alternative that gives you control, cuts costs, and keeps your data in South Africa.

Let me start with a number that should make you uncomfortable: R50,000 per month. That’s what a mid-size South African SaaS company I consulted with was spending on OpenAI API calls for their document processing pipeline. No fine-tuning. No customization. Just feeding customer data into a black box and hoping the invoices stayed manageable.

The worst part? When they asked OpenAI which model version was serving any given request, the answer was essentially “it depends.” When they asked about data residency, the answer was vague. When the rand dropped 8% against the dollar in a single week, their monthly AI bill rose by about R4,000 (8% of R50,000), with zero change in usage.

Self-hosted AI isn’t for everyone. But for the right use cases, it can be 10x cheaper and give you control that cloud APIs never will. Here’s the breakdown.

The Cost Reality: Cloud APIs vs Self-Hosted Infrastructure

Every comparison article starts with “it depends,” and I’m not going to pretend otherwise. But let me give you actual numbers instead of hand-waving.

Cloud AI pricing looks cheap at small scale. OpenAI’s GPT-5.4 charges roughly $2.50 per million input tokens and $15 per million output tokens. Anthropic’s Claude Sonnet 4.6 charges around $3 and $15 respectively. At first glance, that’s nothing. But scale matters.

Consider a document processing workflow that handles 10,000 documents per day, each averaging 3,000 tokens input and 500 tokens output. That’s 30 million input tokens and 5 million output tokens daily. At GPT-5.4 pricing, that’s roughly $75 per day in input costs plus another $75 in output, or $150 per day. Call it roughly R2,750 per day, or about R83,000 per month at current exchange rates.
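The arithmetic above is easy to rerun with your own volumes. Here’s a minimal Python sketch; the token prices are the GPT-5.4 figures quoted above, and the R18.50/$ exchange rate is an assumption, not a live quote.

```python
# Sketch: daily and monthly cost of a token-metered cloud API workload.
# Prices are per million tokens; the exchange rate is an assumed input.

def cloud_cost_usd_per_day(docs_per_day: int,
                           input_tokens_per_doc: int,
                           output_tokens_per_doc: int,
                           usd_per_m_input: float,
                           usd_per_m_output: float) -> float:
    """Return the daily USD cost for a fixed document workload."""
    input_millions = docs_per_day * input_tokens_per_doc / 1_000_000
    output_millions = docs_per_day * output_tokens_per_doc / 1_000_000
    return input_millions * usd_per_m_input + output_millions * usd_per_m_output


def usd_per_day_to_zar_per_month(usd_per_day: float,
                                 zar_per_usd: float,
                                 days: int = 30) -> float:
    """Convert a daily USD cost into a monthly rand cost."""
    return usd_per_day * zar_per_usd * days


daily_usd = cloud_cost_usd_per_day(10_000, 3_000, 500, 2.50, 15.00)
monthly_zar = usd_per_day_to_zar_per_month(daily_usd, zar_per_usd=18.50)  # assumed rate
print(f"${daily_usd:,.2f}/day, about R{monthly_zar:,.0f}/month")
# prints: $150.00/day, about R83,250/month
```

Note that input and output tokens contribute equally here ($75 each), even though output is only a sixth of the volume: output tokens are six times more expensive.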

Now compare that to self-hosting. A single NVIDIA RTX 4090 can run Llama 3.1 8B or Mistral 7B at respectable throughput. The card costs around R48,000 to R52,000 once-off at current retail prices, which AI demand has pushed up. Electricity in South Africa at R4.00 per kWh (the 2026 NERSA baseline for metro areas), with the card running 24/7 at 450W, costs about R1,300 per month. Add in the rest of the server (a decent workstation or rack server), and you’re looking at maybe R5,000 to R6,000 per month in total operating costs.

| Factor | Cloud API (GPT-5.4) | Self-Hosted (Llama 3.1 8B) |
|---|---|---|
| Monthly Cost (10k docs/day) | R83,000+ | R6,000 (infra only) |
| Cost Per Token | ~R0.000046 input, ~R0.00028 output | No per-token charge (after hardware payback) |
| Hardware Cost | N/A | R48,000 – R52,000 (GPU only) |
| Electricity (SA rates) | N/A | R1,300/month |
| Maintenance Overhead | None | 5-10 hrs/month |
| Data Leaves SA? | Yes (US servers) | No (on-premise) |
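The self-hosted side can be sketched the same way. This assumes the card draws its full 450W around the clock at R4.00/kWh, and it uses a hypothetical all-in hardware figure of R120,000 against the monthly totals in the table above; swap in your own quotes.

```python
import math


def monthly_electricity_zar(watts: float, zar_per_kwh: float,
                            hours_per_day: float = 24.0, days: int = 30) -> float:
    """Energy cost of a constant electrical load over a 30-day month."""
    kwh = watts / 1000 * hours_per_day * days
    return kwh * zar_per_kwh


def payback_months(hardware_zar: float, cloud_monthly_zar: float,
                   self_hosted_monthly_zar: float) -> float:
    """Months until hardware spend is recovered from monthly savings."""
    saving = cloud_monthly_zar - self_hosted_monthly_zar
    if saving <= 0:
        return math.inf  # self-hosting never pays back at this volume
    return hardware_zar / saving


print(f"GPU electricity: R{monthly_electricity_zar(450, 4.00):,.0f}/month")
print(f"Payback: {payback_months(120_000, 83_000, 6_000):.1f} months")
```

The payback figure ignores staff time; add the cost of those 5-10 monthly maintenance hours to `self_hosted_monthly_zar` for a fairer comparison.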

Of course, these numbers shift depending on scale. If you’re processing 100 documents a month, cloud APIs are the obvious choice. The break-even point for most self-hosted AI workloads sits somewhere around 5,000 to 10,000 API calls per day, depending on model size and complexity.
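Where your break-even lands depends mostly on tokens per call and on what you count as self-hosted cost. A rough sketch, assuming 30-day months and an exchange rate you supply; the short call profile and the R19,000 all-in monthly figure are illustrative assumptions, not benchmarks.

```python
def usd_per_call(input_tokens: int, output_tokens: int,
                 usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Cloud API cost of a single call."""
    return (input_tokens * usd_per_m_input
            + output_tokens * usd_per_m_output) / 1_000_000


def break_even_calls_per_day(self_hosted_monthly_zar: float,
                             call_cost_usd: float,
                             zar_per_usd: float,
                             days: int = 30) -> float:
    """Daily call volume at which cloud spend equals self-hosted running cost."""
    return self_hosted_monthly_zar / (call_cost_usd * zar_per_usd * days)


# Illustrative short call: 500 tokens in, 150 out, at the GPT-5.4 prices above.
short_call = usd_per_call(500, 150, 2.50, 15.00)

# Infra-only cost vs. a hypothetical all-in figure (amortised hardware + staff time).
for monthly_zar in (6_000, 19_000):
    calls = break_even_calls_per_day(monthly_zar, short_call, zar_per_usd=18.50)
    print(f"R{monthly_zar:,}/month self-hosted -> break-even at {calls:,.0f} calls/day")
```

Longer, more expensive calls push the break-even lower; counting staff time and hardware amortisation pushes it higher.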

The rand-to-dollar exchange rate is a hidden tax on every AI API call. When the rand weakens, the same rand budget buys fewer tokens, but your workload doesn’t shrink.
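To see the effect, hold the dollar bill fixed and vary the exchange rate. The R50,000 starting bill and R18.50/$ rate below are illustrative assumptions, and “weaker by 8%” is modelled as the rand-per-dollar rate rising 8%.

```python
def rand_bill(usd_bill: float, zar_per_usd: float) -> float:
    """Rand cost of a fixed dollar-denominated bill."""
    return usd_bill * zar_per_usd


base_rate = 18.50                 # assumed starting rate, ZAR per USD
usd_bill = 50_000 / base_rate     # a R50,000/month bill expressed in dollars

for weaker_by in (0.00, 0.04, 0.08):
    rate = base_rate * (1 + weaker_by)    # more rand needed per dollar
    print(f"Rand {weaker_by:.0%} weaker: R{rand_bill(usd_bill, rate):,.0f}/month")
```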

And then there’s the load shedding factor. South African businesses can’t rely on grid power for uninterrupted operation. Your self-hosted infrastructure needs UPS backup, generator capacity, or a hosting provider whose local data centers have power redundancy sorted. Factor that into your total cost of ownership.
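A first-pass UPS battery sizing sketch. The 800W whole-server load, four-hour outage window, 90% inverter efficiency and 80% usable depth of discharge are all assumptions to replace with your own hardware specs and load shedding schedule.

```python
def ups_battery_wh(load_watts: float, runtime_hours: float,
                   inverter_efficiency: float = 0.90,
                   usable_depth_of_discharge: float = 0.80) -> float:
    """Nominal battery capacity (Wh) needed to carry a load through an outage."""
    if not (0 < inverter_efficiency <= 1 and 0 < usable_depth_of_discharge <= 1):
        raise ValueError("efficiency and depth of discharge must be in (0, 1]")
    energy_needed_wh = load_watts * runtime_hours
    # Inverter losses and the unusable bottom of the battery both inflate the bank size.
    return energy_needed_wh / (inverter_efficiency * usable_depth_of_discharge)


needed = ups_battery_wh(load_watts=800, runtime_hours=4)
print(f"Battery bank: about {needed / 1000:.1f} kWh nominal")
```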

Control and Data Privacy: What Actually Matters

Cost isn’t the only reason to consider self-hosted AI. For South African businesses in regulated industries, it might be the only viable option.

When you use a cloud AI API, your data transits to and from their servers. For OpenAI, that means US data centers. For most other providers, the same applies. Your customer data, financial records, legal documents, medical records — they all leave South African jurisdiction.

POPIA Compliance

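South Africa’s Protection of Personal Information Act (POPIA) places strict requirements on how personal data is processed and stored. While POPIA doesn’t explicitly ban sending data offshore, Section 72 only allows cross-border transfers under specific conditions, and the Act gives data subjects rights of their own. In practice, that brings in: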

- Informed consent — You must tell users their data goes to US servers

- Adequate protection — The offshore country must have comparable data protection

- Contractual safeguards — Binding agreements with the processor

- Right to object — Users can object to offshore processing

For most companies, this means either a lengthy legal review of every AI provider’s terms of service, or simply keeping the data on-premise. The second option is faster, cheaper, and more defensible in an audit.

Worth noting: South Africa’s Draft National AI Policy (published April 2026) signals that the government intends to fold AI governance directly under Section 71 of POPIA, which specifically handles automated decision-making. Even though the initial draft was withdrawn for revisions, it confirms the regulatory direction: AI systems that make decisions about people will face stricter scrutiny. Self-hosted AI gives you a head start on compliance.

Industries Where Data Sovereignty Is Non-Negotiable

- Financial Services: Banks, insurers, and fintech companies handle sensitive financial data subject to FSCA regulations

- Healthcare: Patient records under the National Health Act cannot be casually exported

- Legal: Attorney-client privilege doesn’t lose its weight because an API call happened

- Government: State organs are bound by strict data classification policies

Model Customisation and Control

Control also means the ability to fine-tune, customise, and adapt. Cloud APIs give you whatever model the provider decides to serve behind a model alias, and even pinned snapshots are retired on the provider’s schedule, not yours. OpenAI has silently swapped model versions, changed output quality, and introduced new safety layers that affect business-critical outputs, all without opt-out options for API customers.

Self-hosted models are yours. You choose the version. You control the parameters. You can fine-tune on your own data without sharing that data with anyone. When Mistral releases a new model, you evaluate it on your terms and deploy it on your schedule.

Control isn’t about paranoia. It’s about predictability. When your AI output drives business decisions, you need to know exactly what model produced it and why.
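One practical way to know which model produced an output is to log it with every call. A minimal sketch, assuming a hypothetical record format: it stores the exact model tag and sampling parameters, plus SHA-256 hashes of the prompt and output so the log itself holds no personal information. The `llama3.1:8b-instruct-q4_K_M` tag is an example Ollama-style tag; substitute whatever you actually deploy.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def sha256_text(text: str) -> str:
    """Hex SHA-256 digest of a UTF-8 string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class InferenceRecord:
    """Audit entry for one model call (hypothetical schema)."""
    model: str                 # exact, pinned model tag
    params: dict               # sampling settings used for this call
    prompt_sha256: str         # hash only: the raw prompt stays out of the log
    output_sha256: str
    timestamp_utc: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


record = InferenceRecord(
    model="llama3.1:8b-instruct-q4_K_M",
    params={"temperature": 0.0, "seed": 42},
    prompt_sha256=sha256_text("Extract the invoice total."),
    output_sha256=sha256_text("R12,500.00"),
)
print(json.dumps(asdict(record), indent=2))
```

Hashes let you later prove that a given prompt and output match a logged call without keeping the raw text.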

There’s also the uptime argument. Cloud APIs have outages. OpenAI has experienced multiple incidents where API availability dropped to single-digit percentages for hours. If your workflow depends on AI processing — document ingestion, customer support bots, real-time analysis — a cloud outage means your business stops. Self-hosted infrastructure, properly configured with redundancy, keeps running.
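Redundancy can start as simply as retrying one backend and then falling back to another. A minimal sketch with the two backends passed in as plain functions (hypothetical stand-ins for your real clients); keep sensitive workloads off any cloud fallback.

```python
import time
from typing import Callable, Tuple

Backend = Callable[[str], str]


def call_with_failover(primary: Backend, fallback: Backend, prompt: str,
                       retries: int = 2, backoff_s: float = 0.5) -> Tuple[str, str]:
    """Try `primary` up to retries + 1 times, then use `fallback`.

    Returns (backend_name, output). Network failures surface as OSError
    subclasses (ConnectionError, TimeoutError, urllib.error.URLError).
    """
    for attempt in range(retries + 1):
        try:
            return "primary", primary(prompt)
        except OSError:
            if attempt < retries:
                time.sleep(backoff_s * 2 ** attempt)  # exponential backoff
    # Primary exhausted: any fallback error propagates to the caller.
    return "fallback", fallback(prompt)
```

The same function works in either direction: cloud primary with a self-hosted fallback, or the reverse.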

When Cloud APIs Actually Win

I’ve spent the last thousand words making the case for self-hosting. Now let me be honest about where cloud APIs are the better choice, because there are real cases where they win.

Prototyping and Experimentation

When you’re validating a concept, spending weeks on infrastructure setup is counterproductive. Spin up a cloud API, prove the concept, then decide on deployment strategy.

Low-Volume Workloads

If you’re making fewer than 5,000 API calls per day, cloud pricing is almost always cheaper than maintaining your own hardware. Don’t over-engineer a solution to a small problem.

Bleeding-Edge Models

GPT-5.5, Claude Opus 4.7, Gemini Ultra — these models are years ahead of what runs on a single consumer GPU. For tasks requiring frontier intelligence, cloud APIs are the only option.

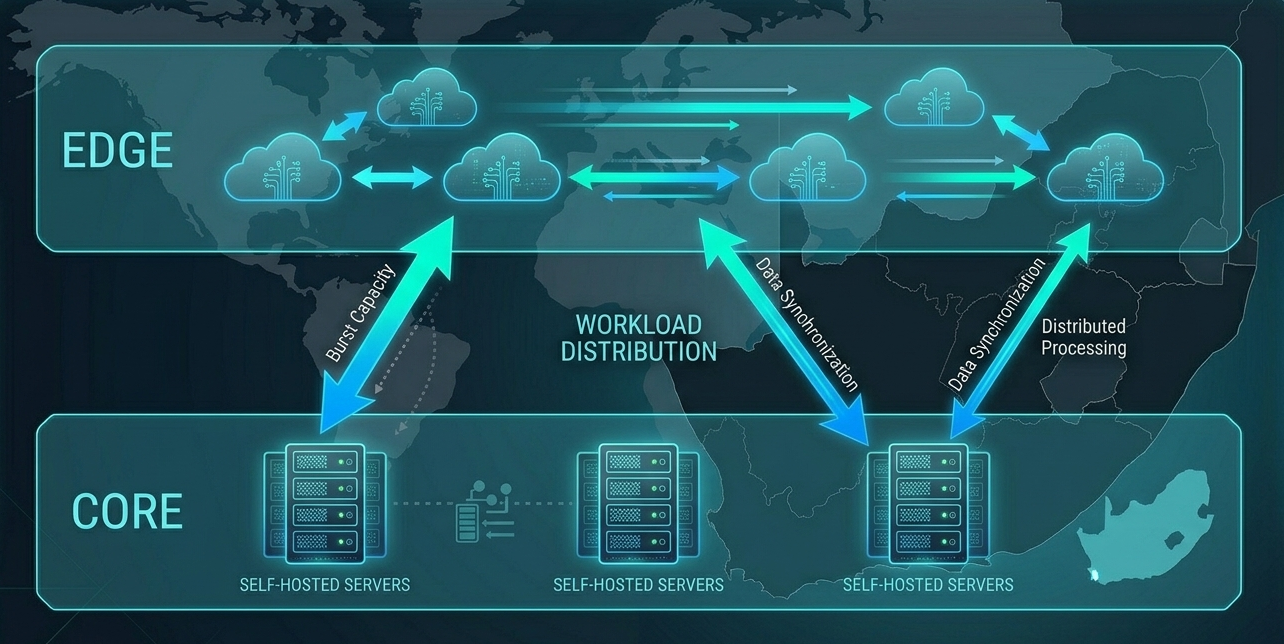

The hybrid approach is where most businesses should land. Self-host your core AI workloads — document processing, customer support, internal search — where volume is high and data sensitivity matters. Use cloud APIs for experimentation, edge cases, and tasks requiring the most capable models available.

This is exactly the architecture we use at NemesisNet. Our AI development services start with a hybrid assessment: what’s your volume, what’s your sensitivity profile, and what models do you actually need? The answer is rarely “all cloud” or “all self-hosted.”
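The hybrid split can be expressed as a small routing rule. A sketch under assumed task categories (the `kind` names are hypothetical): personal data always stays on self-hosted models, frontier-only tasks go to the cloud, and everything else follows the workload type.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    SELF_HOSTED = "self_hosted"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Task:
    kind: str                    # hypothetical category, e.g. "document_extraction"
    has_personal_info: bool      # POPIA-relevant data in the payload?
    needs_frontier_model: bool   # beyond what local hardware can run?


# High-volume core workloads kept on local GPUs (illustrative list).
CORE_KINDS = {"document_extraction", "customer_support", "internal_search"}


def route(task: Task) -> Route:
    """Pick a layer for a task; data sensitivity is checked first."""
    if task.has_personal_info:
        return Route.SELF_HOSTED   # sensitivity wins over capability
    if task.needs_frontier_model:
        return Route.CLOUD
    if task.kind in CORE_KINDS:
        return Route.SELF_HOSTED
    return Route.CLOUD             # prototypes, experiments, edge cases
```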

Infrastructure Requirements: What You Actually Need

Self-hosted AI sounds great on paper. But what does it actually take to set up and run? Let me break it down by tier.

Entry-Level: Single Consumer GPU

An NVIDIA RTX 4090 (24GB VRAM) can run models up to 13B parameters comfortably with quantisation. This handles chat and conversational AI workloads, document summarisation and extraction, code generation for internal tools, and text-to-speech engines like Kokoro or Piper.
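A common rule of thumb for whether a model fits: the weights take roughly parameters × bits per weight ÷ 8 bytes, plus headroom for the KV cache and runtime. The 20% overhead factor below is an assumption; long contexts and heavy batching need more.

```python
def estimate_vram_gb(params_billion: float, bits_per_weight: float,
                     overhead: float = 1.2) -> float:
    """Rough VRAM (GB) for model weights plus a flat overhead factor."""
    # 1e9 params * (bits / 8) bytes = params_billion * bits / 8 decimal GB.
    # Real 4-bit formats often use slightly more than 4 bits per weight.
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb * overhead


RTX_4090_GB = 24
for params, bits in [(8, 16), (8, 4), (13, 16), (13, 4), (70, 4)]:
    need = estimate_vram_gb(params, bits)
    verdict = "fits" if need <= RTX_4090_GB else "does not fit"
    print(f"{params}B @ {bits}-bit: ~{need:.1f} GB, {verdict} in {RTX_4090_GB} GB")
```

This is why a 13B model needs quantisation on a 24GB card, and why 70B models don’t fit even at 4-bit.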

You’ll need a machine with at least 32GB RAM, a modern CPU, and fast NVMe storage. Total hardware cost: roughly R80,000 to R120,000. Software: Ollama, vLLM, or LM Studio for local serving. All free and well-documented.
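Once Ollama is running, applications talk to it over a local HTTP API. A stdlib-only sketch against its `/api/generate` endpoint on the default port 11434; the model tag, temperature and seed are example choices, and the model must already be pulled (for example with `ollama pull llama3.1:8b`).

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_generate_request(model: str, prompt: str,
                           temperature: float = 0.0,
                           seed: int = 42) -> urllib.request.Request:
    """Build a non-streaming /api/generate request with fixed sampling options."""
    body = {
        "model": model,
        "prompt": prompt,
        "stream": False,  # return one JSON object instead of a token stream
        "options": {"temperature": temperature, "seed": seed},
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout_s: float = 120.0) -> str:
    """Send the request to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        return json.loads(response.read())["response"]
```

Usage: `generate("llama3.1:8b", "Summarise this clause in one sentence: <clause text>")`. Fixing the temperature and seed makes repeated runs far more reproducible, which matters when outputs feed business decisions.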

What a Single RTX 4090 Can and Can’t Do

Can Do: 7B-13B parameter models at 30+ tokens/sec, batch processing of 50-100 documents/hour, concurrent serving for small teams (5-10 users), fine-tuning with LoRA adapters.

Can’t Do: 70B+ parameter models (insufficient VRAM), real-time processing at 100+ concurrent users, complex RAG pipelines with large vector stores, multi-modal inference at scale.
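To check whether one card keeps up with a given workload, compare the aggregate generation rate it needs with what it delivers. A rough sketch: it counts output tokens only and ignores prompt processing, and the `streams` parameter assumes per-stream speed holds under batching, which it only partly does. Measure on your own hardware before committing.

```python
SECONDS_PER_HOUR = 3600


def required_tokens_per_sec(docs_per_day: int, output_tokens_per_doc: int,
                            working_hours: float = 24.0) -> float:
    """Aggregate output tokens/sec needed to clear a daily workload."""
    return docs_per_day * output_tokens_per_doc / (working_hours * SECONDS_PER_HOUR)


def docs_per_hour(tokens_per_sec: float, output_tokens_per_doc: int,
                  streams: int = 1) -> float:
    """Upper bound on documents/hour from generation speed alone."""
    return tokens_per_sec * streams * SECONDS_PER_HOUR / output_tokens_per_doc


print(f"Needed for 10k docs/day: {required_tokens_per_sec(10_000, 500):.0f} tok/s")
print(f"One stream at 30 tok/s: {docs_per_hour(30, 500):.0f} docs/hour max")
```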

Production: Multi-GPU Setup

For production workloads, you need more than one GPU. A typical production setup scales from a single consumer card to a multi-GPU cluster with enterprise storage and monitoring.

| Component | Entry-Level | Production |
|---|---|---|
| GPU | 1x RTX 4090 | 2-4x RTX 4090 or A6000 |
| RAM | 32GB DDR5 | 128GB+ ECC |
| Storage | 1TB NVMe | 4TB+ NVMe RAID |
| Serving | Ollama / LM Studio | vLLM + load balancer |
| Monitoring | None | Prometheus + Grafana |
| Est. Monthly Cost | R6,000 | R18,000 – R35,000 |
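In production the load balancer is usually off-the-shelf (nginx, HAProxy or similar), but the routing policy is worth understanding. A minimal least-outstanding-requests sketch over several vLLM servers; the host names are hypothetical, and port 8000 is assumed to be where each server listens.

```python
import threading
from contextlib import contextmanager
from typing import Iterator, List


class LeastBusyBalancer:
    """Send each request to the backend with the fewest requests in flight."""

    def __init__(self, backends: List[str]) -> None:
        if not backends:
            raise ValueError("need at least one backend")
        self._in_flight = {url: 0 for url in backends}
        self._lock = threading.Lock()

    @contextmanager
    def acquire(self) -> Iterator[str]:
        with self._lock:
            # Ties go to the earliest backend in the list (dicts keep insertion order).
            backend = min(self._in_flight, key=self._in_flight.__getitem__)
            self._in_flight[backend] += 1
        try:
            yield backend
        finally:
            with self._lock:
                self._in_flight[backend] -= 1


# Hypothetical hosts, one vLLM server per GPU node.
balancer = LeastBusyBalancer(["http://gpu-node-1:8000", "http://gpu-node-2:8000"])
with balancer.acquire() as url:
    print("sending request to", url)
```

Least-outstanding-requests suits LLM serving better than plain round-robin, because request durations vary widely with output length.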

The Skills Gap

Here’s the uncomfortable truth: setting up a GPU server is the easy part. Running it in production — handling model updates, monitoring performance, managing failures, scaling capacity — requires specialised skills. Most South African IT teams don’t have a “self-hosted AI engineer” on staff.

This is where managed services come in. At NemesisNet, we handle the infrastructure layer so your team focuses on the application. Our self-hosted AI services include deployment, monitoring, and ongoing maintenance — because the value is in the AI, not in babysitting GPU servers.

The real cost of self-hosted AI isn’t the hardware. It’s the operational knowledge required to keep it running. Budget for skills, not just silicon.

The Hybrid Path: The Best of Both Worlds

Most South African businesses shouldn’t go all-in on either approach. The hybrid model gives you the cost benefits of self-hosted infrastructure with the flexibility of cloud APIs when you need them.

Here’s how we architect it in practice:

Core Layer (Self-Hosted)

- Document processing and extraction

- Customer support AI

- Internal search and knowledge base

- Any workload processing sensitive data

Edge Layer (Cloud API)

- Prototyping new features

- Bleeding-edge model capabilities

- Low-volume, high-complexity tasks

- Failover when self-hosted is at capacity
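The last edge-layer item, failover when self-hosted is at capacity, needs one guard: overflow should only carry data you’d be comfortable sending offshore. A sketch using local queue depth as the capacity signal; the threshold and the three outcomes are illustrative assumptions.

```python
from enum import Enum


class Decision(Enum):
    LOCAL = "run on self-hosted GPUs"
    WAIT = "queue locally until capacity frees up"
    CLOUD = "overflow to cloud API"


def place_request(local_queue_depth: int, max_local_queue: int,
                  has_personal_info: bool) -> Decision:
    """Decide where a request runs based on local load and data sensitivity."""
    if local_queue_depth < max_local_queue:
        return Decision.LOCAL
    if has_personal_info:
        return Decision.WAIT   # never overflow personal data offshore
    return Decision.CLOUD
```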

This pattern also fits well with MCP (Model Context Protocol), which is gaining traction. Because MCP standardises how models connect to tools and data sources, the same integrations can serve your self-hosted models, which handle the bulk of the work, and the cloud APIs that act as a specialist layer for tasks beyond what your hardware can run.

The hybrid approach also gives you a natural migration path. Start with everything in the cloud. As volume grows and costs climb, migrate the highest-volume workloads to self-hosted infrastructure. Keep cloud APIs for experimentation and edge cases. This way, you’re never making a binary bet — you’re evolving your infrastructure as your needs change.

Conclusion: Make the Decision Based on Numbers, Not Hype

Self-hosted AI isn’t a religion. Cloud APIs aren’t evil. The right choice depends on your volume, your sensitivity requirements, and your team’s capabilities.

Here’s the bottom line:

- Self-host when: You’re processing more than 5,000 calls daily, data privacy matters, you need offline capability, or you want to control your AI stack long-term

- Use cloud APIs when: You’re prototyping, running low-volume workloads, or need frontier models that consumer hardware can’t run

- Go hybrid when: You want the best of both worlds — which is most businesses

South African businesses face unique challenges: dollar-denominated API costs, unreliable power, POPIA compliance requirements, and connectivity issues. These challenges don’t make AI impossible — they make self-hosted AI a strategic advantage for the companies willing to invest in it.

Ready to Explore Self-Hosted AI?

We help South African businesses evaluate, deploy, and manage self-hosted AI infrastructure. Whether you need a full infrastructure assessment or a proof-of-concept deployment, our team can help you make the right decision for your specific use case.